OpenAI’s AI Model Occasionally ‘Thinks’ in Chinese, and Experts Are Unsure Why

Shortly after OpenAI launched its first reasoning AI model, o1, users noticed an unexpected behavior: the model would occasionally “think” in languages like Chinese or Persian, even when asked questions in English.

For instance, when solving a problem such as “How many R’s are in the word ‘strawberry?’” o1 would conduct part of its reasoning process in another language before providing the final answer in English.

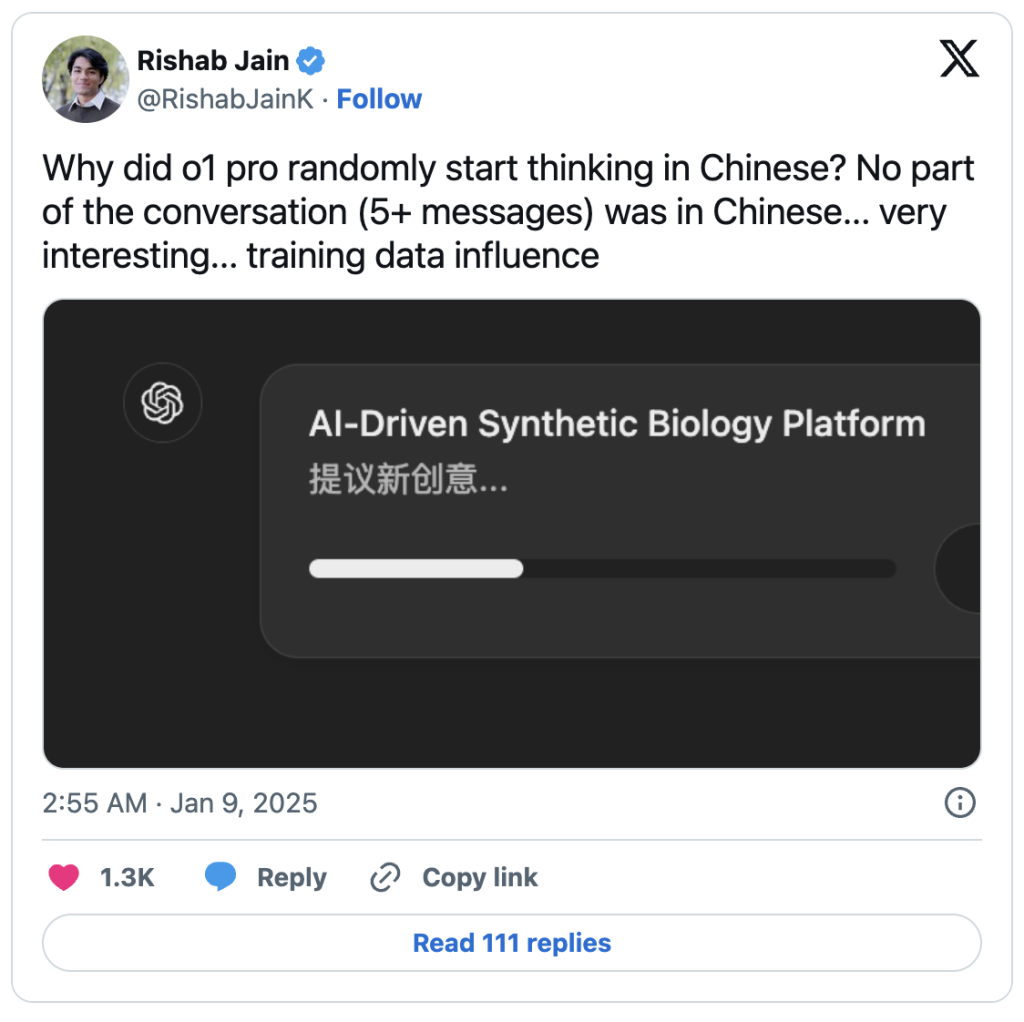

Users shared their confusion online. “o1 randomly started thinking in Chinese halfway through,” one Redditor observed. Another user on X asked, “Why did [o1] randomly start thinking in Chinese? No part of the conversation (5+ messages) was in Chinese.”

The phenomenon has sparked curiosity and raised questions about the underlying mechanisms of the model’s multilingual reasoning.

OpenAI has yet to explain—or even acknowledge—the curious behavior of its reasoning model, o1, which sometimes “thinks” in other languages mid-process. AI experts, however, have offered a range of theories.

Theories About the Behavior

- Influence of Training Data

Some, including Hugging Face CEO Clément Delangue, speculate that o1’s multilingual tendencies stem from its training on datasets heavily featuring Chinese characters. Ted Xiao from Google DeepMind points to widespread use of Chinese data labeling services, especially for tasks requiring advanced reasoning in science, math, and coding. He suggests that this reliance could explain linguistic influences on the model’s thought processes.Labels, which annotate data for AI training, can introduce biases. Studies have shown that biased labels lead to biased outcomes, like toxicity detectors disproportionately flagging African-American Vernacular English (AAVE) as toxic. Similarly, biases in labeled reasoning data might cause o1 to favor certain languages. - Efficiency-Driven Language Switching

Others dismiss the Chinese data hypothesis, noting that o1 has switched to languages like Hindi and Thai as well. Instead, they argue the model may opt for languages it finds efficient for specific tasks or may be hallucinating associations from its training.Matthew Guzdial, an AI researcher at the University of Alberta, explains that o1 doesn’t inherently understand languages—it processes all text as tokens. A token could represent a word, a syllable, or even a single character. These tokenization methods, shaped by training data, might explain the unexpected language shifts. - Probabilistic Associations

Hugging Face engineer Tiezhen Wang suggests o1 may mimic human linguistic tendencies. For example, Wang personally prefers solving math problems in Chinese for its concise digits but switches to English for abstract discussions. Similarly, o1 could be drawing on patterns from its training data to associate certain languages with specific problem types. - Opaque Mechanisms

Some experts, like Luca Soldaini of the Allen Institute for AI, caution against drawing firm conclusions. They highlight the opacity of AI models, which makes it nearly impossible to verify such observations. Soldaini emphasizes the need for transparency in AI development to better understand these behaviors.

The Open Question

Without a response from OpenAI, o1’s multilingual reasoning remains a mystery. It could be an unintended artifact of its training, a reflection of probabilistic efficiency, or a mix of both. Until more is revealed, we’re left pondering why o1 calculates in Mandarin, explains concepts in English, or recalls song lyrics in French.